The Modular Scaling Index is a framework for auditing core banking scalability before high-load infrastructure becomes a crisis. It measures a banking system’s readiness to scale from 100,000 to 10 million users by identifying architectural bottlenecks — what we call Scaling Debt — before they surface under production load.

Vacuumlabs developed this framework from direct experience building and transforming core banking systems across regulated markets. It covers five dimensions: data architecture, API design, service isolation, deployment model, and observability. Banks use it during pre-transformation assessments to prioritise where to modernise first.

Growth feels great until it doesn’t. Many core banking systems handle thousands of users smoothly but choke spectacularly beyond a few million transactions. That is because growth means onboarding more users every month, while scalability means those users don’t slow your transactions to a crawl or crash Friday night batches.

Banks increasingly see legacy cores as transformation blockers, yet few have frameworks to benchmark scalable core banking before high-load infrastructure becomes the crisis.

Here we’ll cover modular scaling, a practical tool you can use to audit system scalability, spot scaling bottlenecks early, and plan backend re-architecture that actually works at a 10M-user scale.

Why some core systems break at scale

So, here’s the thing: when a system hits that massive 10-million-user mark, the actual “laws of physics” for your software start to change. You’d think that if it works for 100k users, you just add more servers and call it a day, right? But that’s usually where the nightmare starts. Most performance bottlenecks in banking aren’t actually caused by “slow code”; they usually come from architectural choices made years ago that seemed fine at the time.

That friction is what we call “Scaling Debt.” It’s like a hidden credit card balance of architectural shortcuts. It stays quiet while you’re small, but the second you hit high-load infrastructure demands, the bill comes due with massive interest.

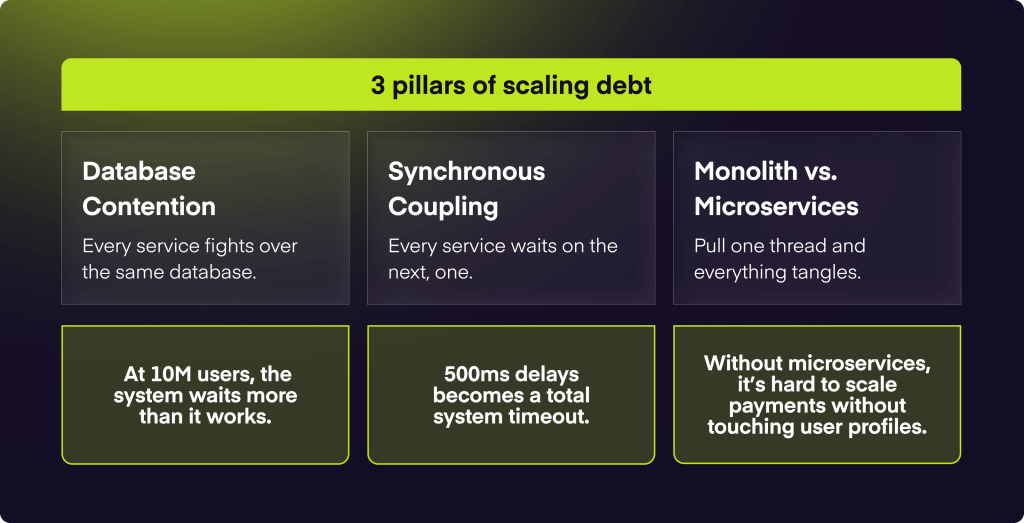

The three pillars of scaling debt

- Database Contention: In a legacy core banking monolith, every single service is basically fighting over the same database locks. At 10M users, the system spends more time just waiting for a “turn” to write data than actually processing transactions. That’s why you see those random Friday night system hangs; it’s one of the classic scaling bottlenecks.

- Synchronous Coupling: If Service A has to wait for Service B to finish before it can move on, your total latency is the sum of every delay in the chain. At scale, a 500ms lag in a sub-service cascades into a total system timeout. One slow API call and the whole house of cards falls over.

- Monolith vs Microservices: You would think a monolith is simpler, and for a small team, it is! But when you’re talking about system scalability, a monolith is like a giant ball of yarn: you can’t pull one string without tangling the rest. Moving to microservices allows you to scale just the “payments” part without needing to scale the entire “user profile” database too.
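To put numbers on the synchronous coupling point, here is a minimal Python sketch. The service names, latency figures, and timeout are invented for illustration. The only claim is the arithmetic: calls made one after another add up, while independent calls issued together cost roughly as much as the slowest one.

```python
# Hypothetical per-service latencies in milliseconds (illustrative numbers only).
latencies_ms = {"auth": 40, "fraud_check": 500, "ledger": 120, "notification": 80}

TIMEOUT_MS = 700  # assumed client-side timeout


def sequential_latency(latencies):
    """Each call waits for the previous one, so the delays add up."""
    return sum(latencies.values())


def concurrent_latency(latencies):
    """Independent calls issued together finish when the slowest one does."""
    return max(latencies.values())


print(sequential_latency(latencies_ms))  # 740, over the assumed timeout
print(concurrent_latency(latencies_ms))  # 500, within it
```

The concurrent figure only applies when the calls do not depend on each other's results. Where they do, the chain has to be shortened or made asynchronous instead.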

What does modular scaling assessment entail?

So, what is modular scaling exactly? You can think of it as a scalability framework that gives you a roadmap instead of guesswork. It’s a way to benchmark whether a core is ready for real growth across dimensions like throughput, latency, and resilience.

For CTOs and engineers, it shows exactly where the system is going to choke, early enough to improve architectural scalability before the load arrives.

A core has to prove it handles the “heavy lifting” across a few key areas:

- Decomposition & Loose Coupling

- Statelessness & Horizontal Scaling

- Data Management (Sharding & CQRS)

- Event-Driven Resilience

Most systems struggle during a scalability assessment because they were built for features, not for performance engineering. With modular scaling assessments, the focus shifts back to operational best practices: automating tasks, robust monitoring, and building in fault tolerance before the system ever hits production.

The key dimensions of scalable architecture

Let’s move on to what actually makes these systems tick. It’s not just one thing, but a mix of architectural choices that prioritize high availability. You’d think throwing more hardware at the problem would be the fix, but without a solid modular architecture, you’re just paying for a bigger, more expensive mess.

Here’s what a true cloud-native core looks like:

1. Throughput & horizontal scaling

Throughput is basically your system’s “breathing room.” To handle 10M+ users, you need horizontal scaling. This lets you add ten, twenty, or a hundred small servers instead of one giant, expensive box that eventually hits a physical limit. For that to work, your scalable core banking system has to be stateless, meaning any server can pick up any request without needing to know “who” it just talked to.
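As a rough illustration of statelessness, the sketch below keeps all session state in a shared store, so either of two servers can pick up the next request. `SessionStore` and `AppServer` are hypothetical names, and the in-memory dictionary is only a stand-in for whatever external cache or database a real deployment would use.

```python
class SessionStore:
    """Stand-in for a shared external store (for example a cache or database)."""

    def __init__(self):
        self._data = {}

    def get(self, session_id):
        return self._data.get(session_id, {})

    def put(self, session_id, state):
        self._data[session_id] = state


class AppServer:
    """Keeps no per-user state in memory; everything lives in the shared store."""

    def __init__(self, name, store):
        self.name = name
        self.store = store

    def handle(self, session_id, item):
        state = self.store.get(session_id)
        items = state.get("items", []) + [item]
        self.store.put(session_id, {"items": items})
        return {"served_by": self.name, "items": items}


store = SessionStore()
servers = [AppServer("node-a", store), AppServer("node-b", store)]

# The same session bounces between nodes and still sees consistent state.
first = servers[0].handle("session-42", "open_account")
second = servers[1].handle("session-42", "order_card")
print(second)
```

Because neither node remembers the caller, a load balancer is free to add or remove nodes without breaking sessions that are in progress.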

2. Low-latency systems & asynchronicity

In high-load banking, every millisecond counts. If your system is “synchronous”, meaning it waits for one task to finish before starting the next, you’re basically building a digital traffic jam. Low-latency systems rely on asynchronous task processing. They decouple the “request” from the “result” using message queues (like Kafka), so the user isn’t staring at a spinner while the ledger updates.
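Here is a small sketch of decoupling the “request” from the “result.” It uses Python’s in-process `asyncio.Queue` purely as a stand-in for a real broker such as Kafka, and the function names and payment fields are assumptions made for the example.

```python
import asyncio


async def accept_payment(queue, results, payment_id, amount):
    """Acknowledge immediately; the ledger update happens later."""
    results[payment_id] = "pending"
    await queue.put((payment_id, amount))
    return {"payment_id": payment_id, "status": "accepted"}


async def ledger_worker(queue, results):
    """Consumes queued payments and posts them to the (simulated) ledger."""
    while True:
        payment_id, amount = await queue.get()
        await asyncio.sleep(0.01)  # stand-in for the slow ledger write
        results[payment_id] = "posted"
        queue.task_done()


async def main():
    queue = asyncio.Queue()
    results = {}
    worker = asyncio.create_task(ledger_worker(queue, results))

    ack = await accept_payment(queue, results, "p-1", 250)
    status_at_ack = results["p-1"]  # still pending when the caller gets its answer

    await queue.join()  # wait until the worker has drained the queue
    worker.cancel()
    return ack, status_at_ack, results["p-1"]


ack, status_at_ack, final_status = asyncio.run(main())
print(ack, status_at_ack, final_status)
```

The caller gets its acknowledgement while the payment is still pending, and the ledger write completes in the background. A production system would also need durable queues and idempotent consumers, which this sketch leaves out.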

3. Elasticity

Scaling up is great, but you also need to scale down. Elasticity lets your infrastructure expand for the morning rush and shrink at 3:00 AM. This doesn’t just save money; it also prevents “resource exhaustion,” where your background processes get bogged down by stagnant, over-allocated nodes.
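One way to picture elasticity is a proportional scaling rule of the kind the Kubernetes Horizontal Pod Autoscaler documents: multiply the current replica count by the ratio of observed to target utilisation and round up. The sketch below is a simplified version of that idea. The function name, the CPU figures, and the minimum and maximum bounds are assumptions for illustration.

```python
import math


def desired_replicas(current_replicas, current_cpu_pct, target_cpu_pct,
                     min_replicas=2, max_replicas=50):
    """Proportional scaling: grow or shrink so average utilisation nears the target."""
    raw = math.ceil(current_replicas * current_cpu_pct / target_cpu_pct)
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(10, 90, 60))  # morning rush: 15
print(desired_replicas(10, 12, 60))  # 3:00 AM: 2
```

The lower bound keeps some redundancy at quiet times, and the upper bound caps spend if a metric misbehaves.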

4. Resilience & fault tolerance

You have to assume things will break. With redundancy and failover mechanisms built in, the rest of the system stays online when one “module” goes down. That’s the difference between a 10-minute glitch and a 4-hour front-page news outage.

5. Modular independence

This is where the “Modular” in the Modular Scaling Index (MSI) comes from. You want to be able to scale your “Payments” module during a holiday sale without having to touch your “User Profile” or “Reporting” modules. That independence is what stops a bottleneck in one corner of the app from dragging down your entire high availability setup.

Real scalability takes serious performance engineering to simulate these high-load scenarios before they happen in production. You have to test your code paths to make sure the system doesn’t just “run,” but actually thrives when the traffic spikes.

Monolith vs modular core: What actually scales

When we talk about monolith vs microservices, it’s not about “good vs. evil.” A monolith is actually great for small teams. It’s simple to deploy and easy to test. But the second you hit that 10M-user mark, that “simple” setup turns into a different story.

The problem is that in a monolith, everything is glued together. If you want to fix a tiny bug in your “Reporting” tool, you have to redeploy the entire bank. That’s why modular core banking is the standard for hyper-growth. It’s about decoupling risks so you only scale or fix what’s actually under pressure.

The practical trade-offs

So, you would think you can just “scale up” forever, but eventually, you hit a wall where no amount of performance engineering can save a rigid monolith. A backend re-architecture toward a modular setup is a huge lift, but it’s the only way to make sure your infrastructure can “breathe” as your user base explodes.

How to assess if your core is ready

If you’re wondering whether your current setup will hold up under a sudden spike in traffic, you’re already asking the right questions. But “hope” isn’t a strategy. To avoid a crisis, you need a cold, hard scalability assessment of your existing infrastructure.

Here is a practical checklist for a backend scalability audit to see if your core can actually handle the pressure:

The diagnostic checklist

Can you scale just one thing?

If your “Payments” volume triples, do you have to scale the entire database and every background worker, or can you just spin up more Payment nodes? If you can’t scale them independently, you’re stuck with a monolith bottleneck.

What happens when a sub-service hangs?

If your SMS gateway or a third-party KYC provider slows down, does it “block” your core ledger threads? You need to ensure you have failure isolation (like circuit breakers) so one slow integration doesn’t tank the whole bank.
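For readers who have not met a circuit breaker before, here is a minimal sketch of the idea. After a set number of consecutive failures it stops calling the slow dependency for a cool-off period and returns a fallback instead. The class, the thresholds, and the KYC function are hypothetical, and production libraries add more states and metrics than this.

```python
import time


class CircuitBreaker:
    """Fails fast after repeated errors instead of letting callers block."""

    def __init__(self, max_failures=3, reset_after_s=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def call(self, func, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                return fallback()  # open: skip the slow dependency entirely
            self.opened_at = None  # half-open: allow one trial call
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0  # a success closes the circuit again
        return result


calls = {"count": 0}


def flaky_kyc_lookup():
    calls["count"] += 1
    raise TimeoutError("KYC provider too slow")


def queue_for_later():
    return "queued_for_retry"


breaker = CircuitBreaker(max_failures=3)
outcomes = [breaker.call(flaky_kyc_lookup, queue_for_later) for _ in range(5)]
print(outcomes, calls["count"])  # the provider is only hit 3 times out of 5
```

Once the breaker opens, the ledger threads are no longer waiting on the third party. They get the fallback immediately and the work can be retried later.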

Are your “Friday Night Batches” getting longer?

Check your processing windows. If your daily reconciliations are starting to take 6 hours instead of 2, you’ve hit a data contention wall. Truly scalable systems move away from “batches” toward real-time event streaming.
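To show the difference in shape between a nightly batch and event streaming, the sketch below reconciles as events arrive, keeping a running difference per account instead of re-scanning the full day. It is deliberately simplified: it nets totals per account, whereas real reconciliation usually matches individual transactions. All names are hypothetical.

```python
class StreamingReconciler:
    """Keeps a running difference per account instead of re-scanning everything nightly."""

    def __init__(self):
        self.diff_cents = {}  # account -> ledger total minus processor total

    def _apply(self, account, delta_cents):
        new_value = self.diff_cents.get(account, 0) + delta_cents
        if new_value == 0:
            self.diff_cents.pop(account, None)  # matched, nothing left to chase
        else:
            self.diff_cents[account] = new_value

    def on_ledger_entry(self, account, amount_cents):
        self._apply(account, amount_cents)

    def on_processor_entry(self, account, amount_cents):
        self._apply(account, -amount_cents)

    def open_mismatches(self):
        return dict(self.diff_cents)


rec = StreamingReconciler()
rec.on_ledger_entry("acc-1", 5_000)
rec.on_processor_entry("acc-1", 5_000)  # both sides agree
rec.on_ledger_entry("acc-2", 1_250)     # processor confirmation still missing
print(rec.open_mismatches())            # {'acc-2': 1250}
```

The work per event stays constant as volume grows, so there is no processing window to overrun.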

Can you deploy on a Tuesday at 2 PM?

If your team is terrified of deploying during peak hours, your architecture isn’t resilient enough. A scale-ready system uses “canary releases” or “blue-green deployments” to update parts of the core without any downtime.
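A canary release boils down to sending a small, stable slice of traffic to the new version first. The sketch below shows one common way to do that, by hashing a customer ID into a bucket from 0 to 99. The function name and ID format are made up for the example, and real setups usually do this in a load balancer or service mesh rather than in application code.

```python
import hashlib


def route_version(customer_id, canary_percent):
    """Deterministically send a fixed slice of customers to the new version."""
    digest = hashlib.sha256(customer_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket 0..99
    return "canary" if bucket < canary_percent else "stable"


customers = [f"cust-{i}" for i in range(10_000)]
share = sum(route_version(c, 5) == "canary" for c in customers) / len(customers)
print(f"{share:.1%} of customers see the canary")
```

Because the bucket depends only on the customer ID, the same customer always lands on the same version, and raising the percentage only ever adds customers to the canary group.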

How long does it take to spin up a new environment?

If it takes weeks to set up a new staging environment, your infrastructure isn’t code. Modern core modernization relies on Infrastructure as Code (IaC) to let you scale globally in minutes rather than months.

Most teams realize they need core modernization about six months too late. If you’re seeing “lock wait” timeouts in your database or your API latency is creeping up by even 50ms every month, that’s the system telling you it’s reaching its physical limit.

When to modernise your core

When is the right time to initiate a core modernization strategy? It’s a tough call. Move too early, and you’re overpaying for capacity you don’t need. Move too late, and you’re trying to change the engines on a plane that’s already on fire.

The “tipping point” is usually a matter of cost. A 2025 whitepaper by RS2 reports that banks spend up to 70% of their IT budgets just to keep old, legacy systems running. That spending can block digital transformation, leaving banks unable to meet modern customer expectations.

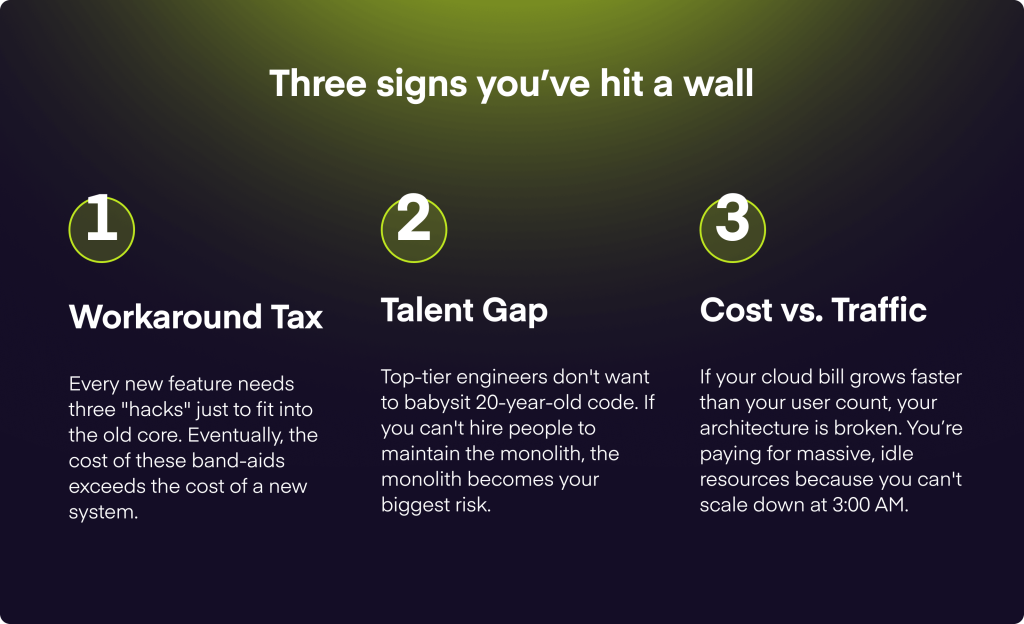

The three signs you’ve hit a wall

- Workaround Tax: Every new feature needs three “hacks” just to fit into the old core. Eventually, the cost of these band-aids exceeds the cost of a new system.

- Talent Gap: Top-tier engineers don’t want to babysit 20-year-old code. If you can’t hire people to maintain the monolith, the monolith becomes your biggest risk.

- Cost vs. Traffic: If your cloud bill grows faster than your user count, your architecture is broken. You’re paying for massive, idle resources because you can’t scale down at 3:00 AM.

Tip from us

You don’t have to do a “big bang” migration. A smarter fintech transformation usually relies on an incremental architecture. Basically, you keep the legacy core for stable, low-touch tasks but move high-growth features (like real-time payments) to a new, modular ledger. You gradually “hollow out” the old system until it’s small enough to decommission safely. This approach reduces the massive risks of a legacy system migration and gives you a much faster return on your investment.
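This incremental approach resembles what is commonly called the strangler fig pattern. A minimal sketch, assuming a single routing facade in front of both systems: each operation goes to whichever core currently owns it, and capabilities are cut over one at a time. The class and operation names are hypothetical.

```python
class LegacyCore:
    def handle(self, operation, payload):
        return f"legacy:{operation}"


class ModularLedger:
    def handle(self, operation, payload):
        return f"modular:{operation}"


class MigrationFacade:
    """Single entry point; routes each operation to whichever core owns it today."""

    def __init__(self, legacy, modern, migrated=()):
        self.legacy = legacy
        self.modern = modern
        self.migrated = set(migrated)

    def migrate(self, operation):
        self.migrated.add(operation)  # cut over one capability at a time

    def handle(self, operation, payload=None):
        target = self.modern if operation in self.migrated else self.legacy
        return target.handle(operation, payload)


facade = MigrationFacade(LegacyCore(), ModularLedger(), migrated={"instant_payment"})
print(facade.handle("instant_payment"))   # modular:instant_payment
print(facade.handle("statement_export"))  # legacy:statement_export
facade.migrate("statement_export")
print(facade.handle("statement_export"))  # modular:statement_export
```

Callers only ever talk to the facade, so moving an operation from the legacy core to the new ledger is a routing change rather than a client change.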

What to look for in a scalable backend partner

Scalability isn’t just a design choice; it has to be proven in operation. Many vendors can build a digital product, but few have actually operated high-load, regulated systems under pressure. When looking for a fintech core modernization partner, you need to look past the pitch deck and audit their “scars.”

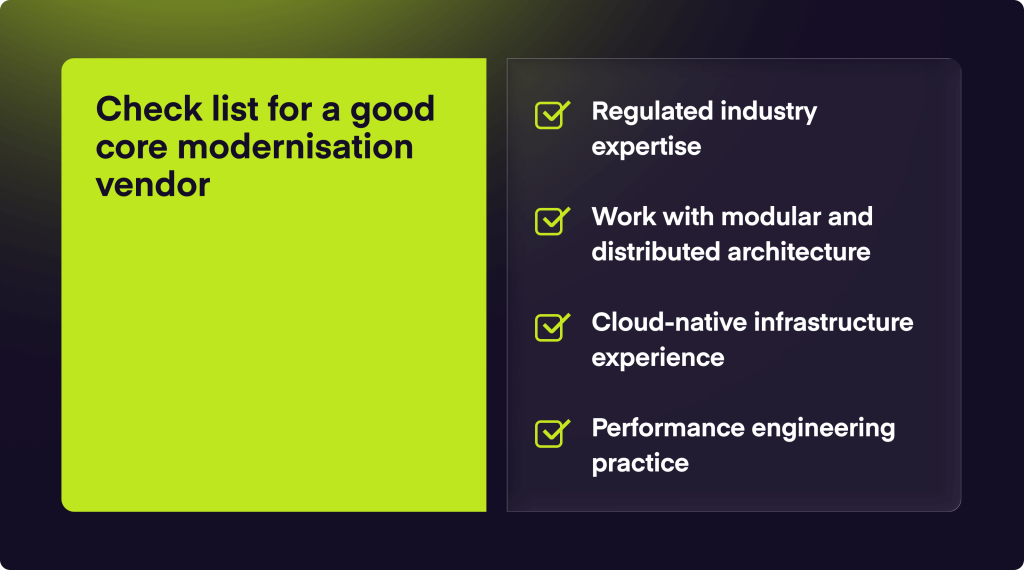

So when you are about to select a high-load system development company, consider these non-negotiable criteria:

- Regulated industry expertise: Deep familiarity with banking and payment services is mandatory. Compliance and data sovereignty must be built into the code, not added as an afterthought.

- Modular and distributed architecture: A partner must demonstrate how they decouple services. Our work is based on the principle that eliminating single points of failure is the only way to ensure long-term stability.

- Cloud-native infrastructure: Hands-on expertise in Kubernetes and autoscaling is essential. It’s the difference between a system that scales efficiently and one that simply runs up a massive cloud bill.

- Performance engineering practice: There should be a dedicated focus on stress testing and observability.

The right partner provides a roadmap that prevents future vendor lock-in. Ensure your scalable infrastructure consulting includes a plan for long-term technical autonomy.

How many users can a core banking system handle?

Capacity depends on concurrency, not just total accounts. While legacy monoliths often hit bottlenecks at 100,000 concurrent sessions, modern core banking infrastructure built on distributed systems can handle millions of users. Scalability is achieved by decoupling services so that high-traffic features (like balance checks) don’t impact the performance of the central ledger.

What makes a backend architecture scalable?

A scalable architecture can handle increased load simply by adding hardware resources without requiring code changes. This requires three things: decoupling (independent services), statelessness (allowing any server to handle a request), and load balancing to distribute traffic across a cluster.

When does a monolith stop scaling?

A monolith stops scaling when “scaling up” (adding more RAM or CPU) no longer improves performance. This usually happens due to database contention or “spaghetti code” that makes deployment too risky. At this point, the “maintenance tax” exceeds the cost of migration, and the system must transition to a distributed architecture to remain competitive.

What is horizontal vs vertical scaling?

Vertical scaling is adding more power to one machine. It has a hard physical limit. Horizontal scaling is adding more machines to a network. For banking, horizontal scaling is the standard because it lets the system scale out during peak hours and scale in when traffic is quiet, which significantly reduces cloud infrastructure costs.